The question landed somewhere between the appetizers and the first round of real disagreement: With the rise of AI, what does mastery even mean?

Around the table, the reaction wasn’t panic so much as recognition. A composer pushed back first: mastery isn’t going anywhere, but it’s shifting. Presume everyone can quickly learn a sophisticated skillset — when that’s the baseline, mastery becomes what you do to excel beyond it.

No one fully agreed. No one dismissed it, either.

This is what AI conversation sounds like when people aren’t performing it. And it’s very different from the one happening in public.

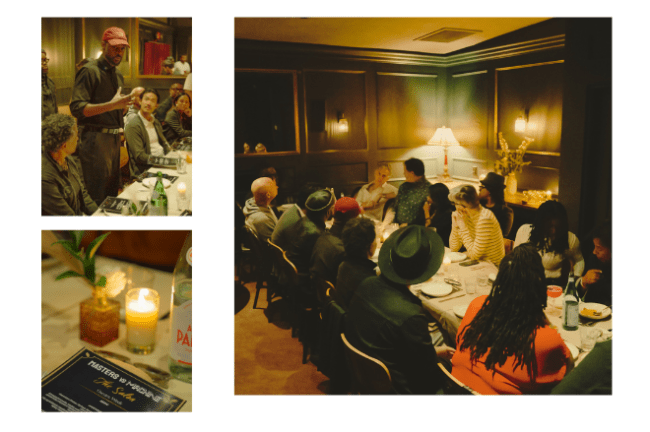

Earlier this month, I attended Masters vs Machine, a private, off-the-record AI dinner hosted by music producer Roahn Hylton. (He produced it in partnership with AI & The Culture and Judene Small, a partner in a16z’s Cultural Leadership Fund.) The evening created what’s almost impossible to find in the wild: honest conversation around what people really think about AI.

Hylton told me he created this space because he couldn’t find it anywhere else.

Hylton founded Masters vs Machine out of frustration. At the time he was the chair of the Grammys’ songwriters and composers branch and saw that for all the questions around AI, Grammys policy on it was “touch and go.” Artists wouldn’t talk about it, but in the studio “the first thing they’re doing is cracking open AI.”

That was a problem. “You couldn’t keep up with what was going on, which meant that there was real deal flow happening in the space above everybody’s heads,” he said. “And people were talking about creatives, but creatives weren’t welcomed to the conversation.”

That led him to create Masters vs Machine as a series of spaces where “creatives are able to have the conversation around AI. We need to be able to have a table that we set where the technologists and my friends in DC, my policy friends, are able to tap our brains on what these technologists are saying, and then vice versa.” (The next one is planned during the Cannes Film Festival.)

At the dinner in West Hollywood, Hylton assembled an industry cross-section: studio executives, producers, technologists, investors, and working artists across film, television, music, and AI. We gathered at Carmel restaurant on Melrose, where Hylton is a partner, in a back room where Mediterranean dishes arrived family style. The food was excellent, but it never had the chance to pull focus. This was an evening where conversation dominated.

What the room actually talked about

It shouldn’t be this hard, right? But it is. If you’re an AI supporter, there’s no space for doubt; if you’re an AI skeptic, curiosity can be interpreted as treason. That’s why so many AI panels skew declarative or defensive: the risk of fallout is intense.

In private, you can be messier and more honest. Far more useful.

What emerged from the evening wasn’t consensus. It was a set of tensions the industry hasn’t yet figured out how to hold in public. Here’s how the discussion broke down.

Is mastery still relevant?

The conversation opened with a fundamental question: If anyone can generate content, what happens to expertise?

The instinct, especially in creative fields built on apprenticeship, is to assume erosion. If tools collapse the time it takes to produce something, then the value of time spent learning should collapse with it.

But that wasn’t how the table saw it.

What emerged was a reframing. Mastery isn’t disappearing, but it may be relocating. As tools become more accessible, technical barriers fall but the outcomes don’t equalize. When everyone has access to the same capabilities, the differentiator shifts toward judgment: what to make, why to make it, and how to shape it.

Several people described a compressing of the traditional learning curve. The 10,000-hour model may be giving way to shorter cycles of learning applied across projects, with progress measured by iteration and output.

That doesn’t eliminate mastery, but it changes how it’s developed and recognized.

Takeaway: Mastery still exists, but it’s being redefined in real time.

When everyone can create, who stands out?

As AI lowers barriers to entry, the friction between idea and execution shrinks. But access doesn’t guarantee distinction.

Several people pointed to YouTube as a precedent: a platform where anyone can publish, but only a fraction break through. As volume increases, so does competition. Democratization doesn’t eliminate hierarchy.

There was optimism: It brings new creators who couldn’t have accessed traditional systems. We see new forms and a broader range of voices.

There was also realism: More participation produces more attempts, not more success. In that environment, the ability to stand out becomes more important.

Takeaway: AI expands participation, not necessarily the odds of success.

The real anxiety is economic, not creative

Public conversation around AI tends to center on creativity and what it means for art, authorship, and originality.

In the room, the conversation kept returning to something more immediate: Money.

Job loss. Shrinking budgets. The erosion of a middle class. The uncomfortable understanding that while new tools create opportunity, they also compress timelines and reduce the need for labor.

This wasn’t theoretical. It was practical. People spoke about short-term decisions, including whether to take AI-assisted work, whether to adopt tools that could replace parts of their roles, whether there will be a place for their skills in the next cycle.

The tension is immediate: adapt or fall behind, but adapting may accelerate the very changes that make long-term stability more difficult. Awkward, and very real.

Takeaway: The biggest fear isn’t what AI makes, but what it replaces.

Public position vs. private behavior

One of the evening’s clearest throughlines was the gap between what’s said publicly and what happens in practice.

AI is often framed in absolute terms: a threat to be resisted or a tool to be embraced. There’s little room for ambiguity. Privately, the behavior is more complicated.

Some artists who speak out against AI also explore it behind the scenes — and not necessarily out of enthusiasm. When the cost of ignoring these tools is increasingly visible, it feels like a competitive necessity.

Different incentives are at play. Reputation, labor dynamics, and community expectations shape what can be said even as the day-to-day reality of making work pushes in another direction.

So the conversation splits. Not because people aren’t thinking about AI, but because they’re thinking about it in ways that don’t easily translate.

Takeaway: What the industry says about AI doesn’t align with what it does.

Speed is the destabilizing force

More than any single capability, what stood out was pace. One participant described a problem scoped as a six- to 12-month effort, now resolved in a week.

That kind of compression changes expectations. It also destabilizes planning. Timelines shorten, decision cycles tighten, and the window between experimentation and adoption narrows.

The pace even outstrips the systems designed to manage it, like contracts, workflows, and shared understanding.

Takeaway: AI is changing what it means to operate at speed.

No clear rules and none incoming

When the conversation turned to policy, the tone became blunt: Regulation doesn’t have a clue.

Lagging regulation isn’t unusual for emerging tech, but this is a gap measured in light years. Even at the industry level, there’s no shared definition of what qualifies as AI, how it should be categorized, or how it fits into frameworks like guild agreements.

Without shared understandings, studios, labor organizations, technologists, and investors operate with different assumptions, timelines, and incentives. It feels like consensus can’t catch up.

A structure may emerge, but no one’s counting on it.

Takeaway: For the foreseeable future, the industry will operate without a coherent rulebook.

Creativity may be reframed

One point drew broad agreement: Creativity isn’t the variable at risk.

AI can generate, assist, and accelerate. It can reduce friction and expand what’s possible. But while it can be a powerful extension of creators’ capabilities, it can’t replicate them: taste, collaboration, emotional intuition, recognizing what resonates.

However, its use carries inherent risk. Used carelessly, it can flatten work and strip away specificity. Designed to produce at scale, it runs counter to what makes creative work distinct.

That balance isn’t solved. But it’s where the conversation is headed.

Takeaway: We know creativity survives, but not how it will evolve.

The biggest gap: Where the conversation happens

AI is already reshaping how work gets made, financed, and distributed. That’s not a future scenario. It’s happening now, unevenly and often invisibly. And we lack the ability to talk about it honestly in public.

This isn’t unique to entertainment. It’s how we’ve learned to engage with anything that feels destabilizing. But it’s a problem when the subject is something actively reshaping the system itself.

Whether or not the industry chooses to engage in that middle space, the work is moving forward.

The only question is who gets to participate in shaping it.

The most important insight of the night wasn’t about AI itself. It was about where and how these conversations take place.

It’s great that discussions like the one Hylton hosted are happening, where people can speak without immediate consequence.

Public conversation remains a problem.

We may as well talk about this. AI is already embedded in the way our work is conceived, developed, and produced. Its effects are uneven, unresolved, and deeply interconnected with creativity, economics, labor, and control.

Takeaway: AI doesn’t lack for conversation, but it’s in dire need of visible, collective thinking.