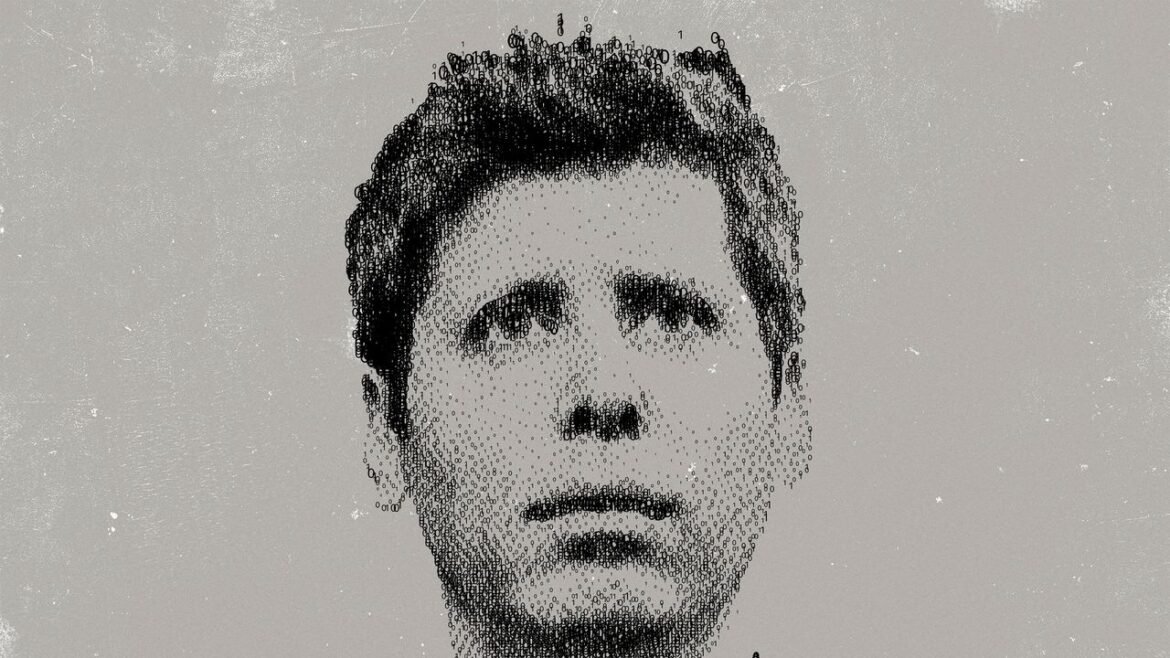

At the end of February, OpenAI’s C.E.O., Sam Altman, made headlines by swiftly cutting a deal with the Pentagon for his company to replace Anthropic, which had balked at the Trump Administration’s bid to use its A.I. technology to power autonomous weapons and aid in mass surveillance. Days earlier, Altman had publicly supported Anthropic’s position in the dispute. Altman’s rise to power and his founding of OpenAI were predicated on placing safety above other concerns in developing artificial general intelligence. Why did he change his stance on such a fundamental issue? The New Yorker writers Ronan Farrow and Andrew Marantz spoke with Altman multiple times and interviewed more than a hundred people for their investigation into the leader of one of the most powerful companies in the world. They compare Altman to J. Robert Oppenheimer, and although there is no smoking gun in Altman’s hand, they find that persistent allegations about his conduct underscore the danger of entrusting him to wield such vast power over the future.