By: Brett Axler, Casper Choffat, and Alo Lowry

In the three years since our first Live show, Chris Rock: Selective Outrage, we have witnessed an incredible expansion of our live content slate and the live operations that support it. From modest beginnings of streaming just one show per month, we are now capable of streaming over nine shows in a single day, reaching tens of millions of concurrent members. This post pulls back the curtain on the Live Operations teams that enable this rapid scale.

Humble Beginnings

In March 2023, the engineers who built Netflix’s first live streaming pipeline also operated it. There was no dedicated operations team or formal command center. All of our incident response playbooks were written for SVOD, and our SLAs were not designed for the speed of live. For the first live shows on the platform, the engineers who designed the systems described in earlier parts of this series monitored dashboards on laptops, coordinated over Slack, and troubleshot in real time while millions of members watched.

The physical setup matched the operational workflows: improvised. Temporary control rooms were put together in conference rooms. For larger events, Netflix rented third-party broadcast facilities, hardware control panels, multiviewers, and communication panels — the kind of infrastructure that established broadcast networks had built over decades. Every show was a team effort. Engineers and leadership at all levels were involved in every event. Each live show, regardless of size, was a massive effort to launch.

Last month, in March 2026, Netflix streamed the World Baseball Classic live to members in Japan: 47 matches over two weeks, with peak concurrent viewership exceeding 9.6 million accounts for a single game. Operations ran 24/7 from permanent facilities in Los Gatos and Los Angeles, with international coverage extending to Tokyo. In March alone, Netflix launched approximately 70 live events, three shy of the total number it streamed live in all of 2024. The technical systems that make this possible have been covered in detail across this series. What hasn’t been told is the operational story: the people, procedures, and facilities Netflix built to run those systems in real time, under pressure, with no ability to pause or roll back.

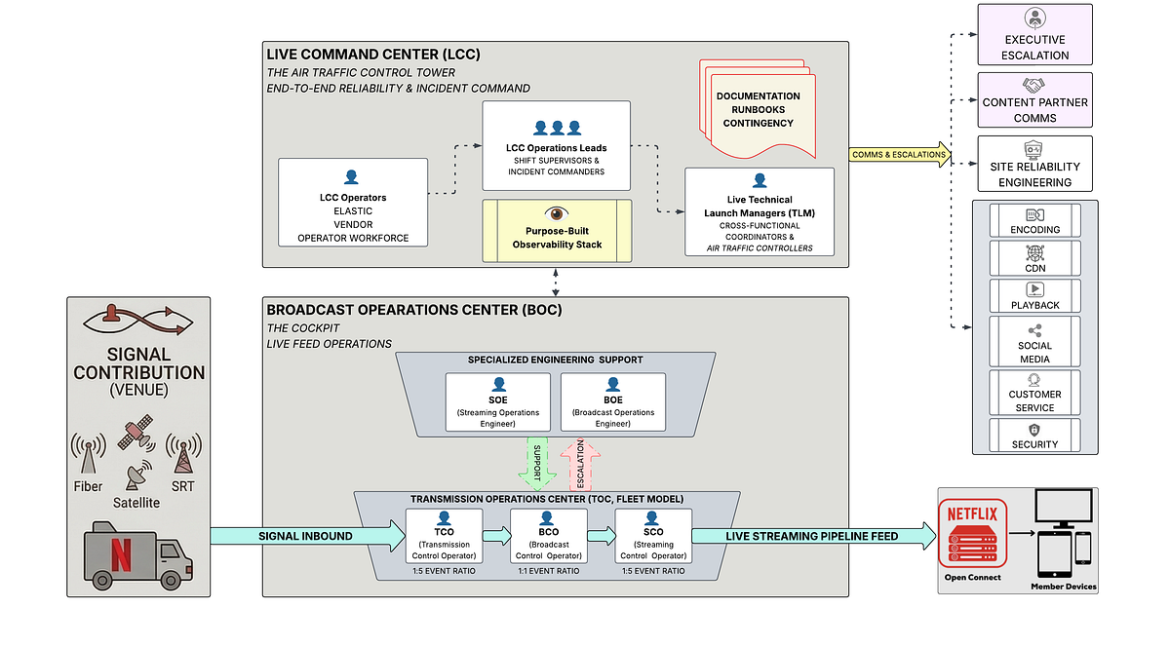

The Architecture of Live Operations

Evolving the Broadcast Operations Center

When a technology company transitions into live broadcasting, it faces a unique challenge: blending traditional broadcast television practices with massive-scale live-streaming engineering. At the heart of this intersection is the Broadcast Operations Center (BOC).

The BOC serves as the critical “cockpit” for live events. It is the physical command center where a fully produced video feed is received directly from a stadium or venue and then handed off to the live streaming infrastructure. Everything from signal ingest, inspection, and conditioning to closed-captioning, graphics insertion, and ad management happens within these walls. The BOC uses a hub-and-spoke model with highly redundant architectures, such as dual internet circuits and SMPTE 2022-7 seamless switching. This replaces direct, vulnerable paths from the venue to the live streaming pipeline, making each live event highly repeatable and far less dependent on the quirks of individual event locations.
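To make the seamless-switching idea concrete, here is a minimal sketch of the core of SMPTE 2022-7-style protection: the same packets travel over two paths, and the receiver keeps the first copy of each sequence number it sees. The `Packet` type and `merge_redundant` function are hypothetical names chosen for illustration, not part of Netflix's tooling. A real receiver also handles sequence wraparound, reordering, bounded buffering, and skew between paths, none of which is modeled here.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Packet:
    seq: int        # sequence number, identical on both paths
    path: str       # which path delivered this copy ("A" or "B")
    payload: bytes


def merge_redundant(arrivals: Iterable[Packet]) -> Iterator[Packet]:
    """Yield the first-arriving copy of each sequence number.

    `arrivals` is the interleaved arrival order across both paths.
    Simplification: the set of seen sequence numbers grows without bound.
    """
    seen: set[int] = set()
    for pkt in arrivals:
        if pkt.seq in seen:
            continue  # duplicate from the other path; drop it
        seen.add(pkt.seq)
        yield pkt


# Path A loses packet 2; path B still delivers it, so the output has no gap.
arrivals = [
    Packet(1, "A", b"f1"), Packet(1, "B", b"f1"),
    Packet(2, "B", b"f2"),
    Packet(3, "A", b"f3"), Packet(3, "B", b"f3"),
]
merged = list(merge_redundant(arrivals))
recovered_seqs = [p.seq for p in merged]    # [1, 2, 3]
recovered_paths = [p.path for p in merged]  # ["A", "B", "A"]
```

Because the merge happens per packet rather than per stream, a loss on one path never becomes visible downstream as long as the other path delivered that packet.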

Securing the Signal: Reliability from the Venue

Before the BOC can work its magic, we have to guarantee the video and audio feeds actually survive the journey from the production site to our facility. To ensure absolute reliability from the venue, Netflix enforces strict specifications for live signal contribution.

For any show-critical feed, meaning the primary feed our members will watch live, we require three completely discrete transmission paths. We utilize a strict hierarchy of approved transmission methods, prioritizing dedicated video fiber and single-feed satellite links, followed by dedicated enterprise-grade internet and robust SRT contribution systems.

We don’t just rely on redundant transport lines; we require full hardware redundancy out of the production truck itself. This includes using separate router line cards and discrete transmission hardware to prevent any single point of failure. Furthermore, every single piece of transmission hardware at the venue must be powered by two discrete power sources, protected by uninterruptible power supply (UPS) batteries, and surge-conditioned.
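Requirements like these lend themselves to an automated pre-show check. The sketch below is illustrative only: the `Path` fields, the method labels, the two-tier reading of the transmission hierarchy, and the `validate_contribution` function are all assumptions made for this example, not Netflix's actual schema or tooling.

```python
from dataclasses import dataclass

# Approved methods grouped into tiers (lower is preferred), following the
# hierarchy described above. The string labels are hypothetical.
METHOD_TIER = {"fiber": 1, "satellite": 1, "internet": 2, "srt": 2}
REQUIRED_PATHS = 3


@dataclass(frozen=True)
class Path:
    name: str
    method: str            # expected to be a key of METHOD_TIER
    router_line_card: str
    tx_hardware: str
    power_sources: int     # number of discrete power feeds
    on_ups: bool


def validate_contribution(paths: list[Path]) -> list[str]:
    """Return human-readable violations; an empty list means compliant."""
    problems = []
    if len(paths) < REQUIRED_PATHS:
        problems.append(f"need {REQUIRED_PATHS} discrete paths, found {len(paths)}")
    # Any hardware shared between paths is a single point of failure.
    for attr in ("router_line_card", "tx_hardware"):
        values = [getattr(p, attr) for p in paths]
        if len(set(values)) < len(values):
            problems.append(f"shared {attr} is a single point of failure")
    for p in paths:
        if p.method not in METHOD_TIER:
            problems.append(f"{p.name}: unapproved method {p.method!r}")
        if p.power_sources < 2 or not p.on_ups:
            problems.append(f"{p.name}: needs two power sources and a UPS")
    return problems


plan = [
    Path("primary", "fiber", "card-1", "enc-1", 2, True),
    Path("backup", "satellite", "card-2", "enc-2", 2, True),
    Path("tertiary", "srt", "card-2", "enc-3", 1, True),
]
problems = validate_contribution(plan)
# Flags the shared line card (card-2) and the single power feed on "tertiary".
```

Returning a list of violations instead of raising on the first one lets an operator see every gap in a venue's plan in a single pass.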

Finally, before we ever go live to millions of viewers, our operators execute exhaustive “FACS/FAX” (facilities checks) testing during rehearsals and before every show. This involves running specialized audio/video sync, latency, and quality tests, validating closed captions, and touring the backup switcher inputs.

Building the Human Infrastructure

Building the human operational model to run a facility like the BOC didn’t happen overnight. For a platform scaling from its very first live comedy special to streaming over 400 global events a year, the operational strategy had to undergo a massive, multi-year evolution.

Phase 1: The “All-Hands” Engineering Era. In the earliest days of live streaming, there was no dedicated operations team or formal broadcast operations center. The software engineers who wrote the code and built the live-streaming infrastructure were the same people manually operating the events on launch night. Every show was an “all-hands-on-deck” scenario. While this raw, startup-style approach worked for initial milestones, having core developers manually set up and tear down software configurations for every single broadcast was fundamentally incapable of scaling.

Phase 2: The Shift to Specialized Engineering (SOEs and BOEs). To separate event execution from core software development, the operational model matured to introduce specialized engineering teams. First, the Streaming Operations Engineering (SOE) team was established. These are highly skilled streaming engineers whose sole focus is to configure the full event on the live pipeline and support it during the broadcast. By having SOEs act as the first line of escalation, the core software developers were freed up to focus on building new live-streaming pipeline features.

However, as the physical broadcast facilities grew, it became clear that supporting the streaming pipeline wasn’t enough; the physical broadcast hardware and facility workflows needed dedicated oversight too. To solve this, Broadcast Operations Engineers (BOEs) were introduced to work alongside the SOEs. The BOE acts as the primary escalation point for all physical broadcast facility and hardware issues, overseeing the operation of all shows during a given shift.

Phase 3: The “Co-Pilot” Control Room Model. With specialized engineers in place to handle the deep technical infrastructure, the day-to-day operation of the actual video and audio feeds was handed over to dedicated operators. Initially, the Broadcast Control Rooms were structured much like an airplane cockpit.

This approach used a “first and second captain” workflow, pairing two Broadcast Control Operators (BCOs) together to run a single event, functioning exactly like a pilot and co-pilot. This collaborative model allowed for intense focus and high-quality execution, making it the ideal setup for running just one or two live events per day. However, as the ambition grew to stream up to 10 concurrent events a day for massive global tournaments, pairing two operators on every event simply required too much space and staffing. A new model had to be adopted.

Phase 4: The Transmission Operations Center (TOC) Fleet Model. To manage high-density event days and continuous tournament coverage, the workflow was completely reimagined with the launch of the Transmission Operations Center (TOC) model. Rather than treating every live broadcast as an isolated launch in its own room, the TOC treats live events like a fleet. It centralizes operations and distinctly separates the traditional broadcast functions from the streaming functions to maximize human efficiency.

The TOC model divides the labor across three highly specialized, tiered roles:

- Transmission Control Operator (TCO): The TCO is responsible for managing all inbound signals arriving from the event venues, such as fiber optic, SRT, and satellite feeds. They ensure these incoming feeds meet strict quality, latency, and operational thresholds. Thanks to centralized dashboarding, a single TCO can manage up to five events concurrently.

- Streaming Control Operator (SCO): While the TCO handles what comes in, the SCO manages what goes out. They oversee all outbound feeds, including the streams heading to the live streaming pipeline and any syndication feeds sent to third parties for commercial distribution. Like the TCOs, SCOs can manage up to five events concurrently.

- Broadcast Control Operator (BCO): With the inbound and outbound transmission mechanics handled by the broader TOC, the BCO is able to focus entirely on the creative and qualitative execution of the event. Operating on a strict 1:1 ratio (one operator per event), the BCO seamlessly switches between backup inbound feeds if an issue arises, ensures audio and video remain in perfect synchronization, and performs rigorous quality control. They also monitor critical metadata, such as closed captions and digital ad-insertion messages (SCTE), right before the final polished feed is handed into the live streaming pipeline.
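The ratios above translate directly into staffing arithmetic. The sketch below computes the minimum number of operators per role for a given number of concurrent events and compares it with pairing two BCOs on every event. The function names are hypothetical, and the numbers are lower bounds only: they assume every TCO and SCO is loaded to the five-event maximum and ignore shifts, breaks, and the SOE and BOE escalation roles.

```python
import math

EVENTS_PER_TCO = 5  # "up to five events concurrently"
EVENTS_PER_SCO = 5
EVENTS_PER_BCO = 1  # strict 1:1 ratio


def toc_staffing(concurrent_events: int) -> dict[str, int]:
    """Minimum operators per role under the TOC fleet model."""
    return {
        "TCO": math.ceil(concurrent_events / EVENTS_PER_TCO),
        "SCO": math.ceil(concurrent_events / EVENTS_PER_SCO),
        "BCO": math.ceil(concurrent_events / EVENTS_PER_BCO),
    }


def copilot_staffing(concurrent_events: int) -> int:
    """Operators needed when two BCOs are paired on every event."""
    return 2 * concurrent_events


fleet = toc_staffing(10)              # {"TCO": 2, "SCO": 2, "BCO": 10}
fleet_total = sum(fleet.values())     # 14
paired_total = copilot_staffing(10)   # 20
```

Under these assumptions, ten concurrent events need at least 14 operators in the fleet model versus 20 in the paired model, and the gap widens as concurrency grows because the TCO and SCO roles are shared across events.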

The Big Bet Exception. While the fleet-style TOC model enables immense concurrency for daily programming, the most critical, high-visibility events, like major holiday football games, utilize a specialized Big Bet Model. For these flagship broadcasts, an entire Broadcast Operations Center is dedicated exclusively to a single event. This hyper-focused environment strips away the multi-event ratios, providing operators with advanced instrumentation and dedicated facility engineers to ensure the absolute highest level of reliability for events where failure is simply not an option.

Source: netflixtechblog.com