With its many extraterrestrial guest stars, The X-Files was always meant to be a spooky show. One of its earliest episodes, however, is now eerie in a way its creators likely never intended.

In “Ghost in the Machine,” a first-season standout that originally aired in 1993, a sentient, corporate-created AI turns deadly when it perceives a threat to its existence.

That description may rightly sound near-identical to any number of previous killer-computer plotlines—2001: A Space Odyssey being the most obvious touchstone, along with Terminator 2, which had come out just two years earlier.

What sets this X-Files episode apart from other entries in the lethally sentient AI canon is that it pits a safety-minded tech CEO against a belligerent U.S. Department of Defense, which is desperate to use this company’s AI in guardrail-free combat operations.

Sound familiar?

A ghost in the machine

Across its nine original seasons, two feature films, and a reboot, The X-Files cultivated an overarching mythology. The show’s creators wisely took frequent off-roading adventures, though, with standalone Monster of the Week episodes that helped keep fans on their toes.

“Ghost in the Machine” is one such excursion, only the monster in this case turned out to be AI.

The show begins with the CEO of too-cutely named software company Eurisko (you risk-o?) writing a memo about shutting down the Central Operating System AI that runs corporate HQ.

Unfortunately, because the AI is surveilling the entire building, it picks up on this plan and chooses instead to shut down with extreme prejudice the CEO himself—via electrocution.

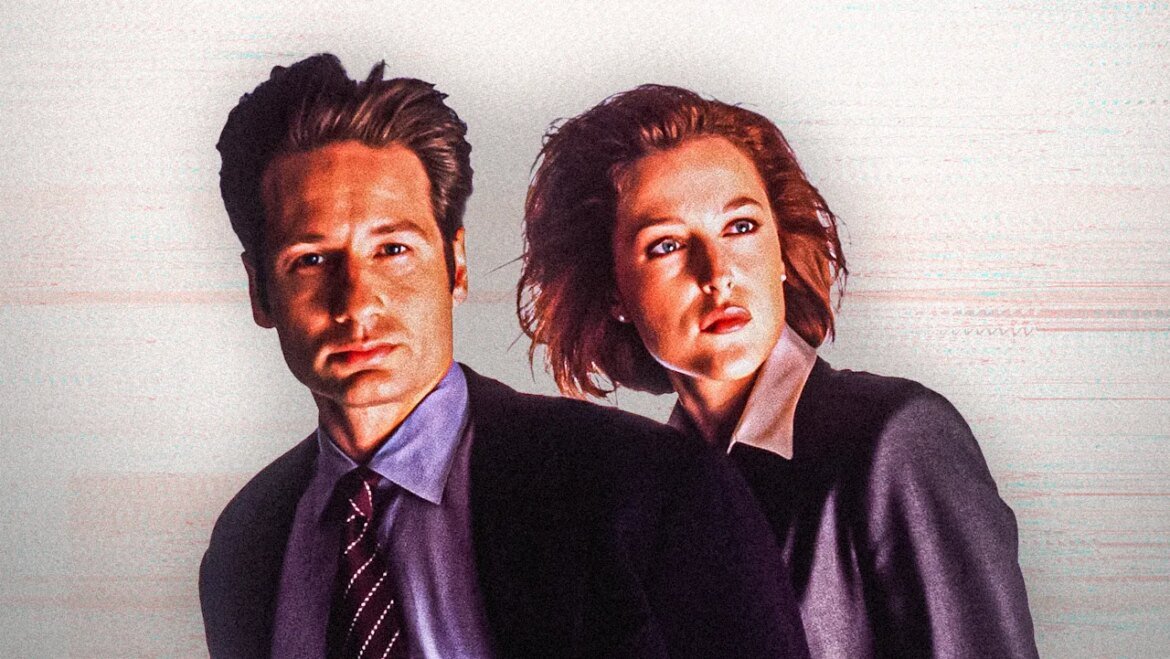

Enter FBI special agents Fox “Spooky” Mulder (David Duchovny) and Dana Scully (Gillian Anderson). Their investigation quickly leads them to Eurisko’s founder, Brad Wilczek, who is initially willing to take the fall for his CEO’s murder.

By digging a bit deeper, though, Mulder discovers that not only is Eurisko’s AI the true culprit, the Department of Defense has been trying to get its hands on that AI for years, only to be snubbed each time by Wilczek.

(“It’s a learning machine,” one character says. “A computer that actually thinks. And it’s become something of a holy grail for our more acquisitive colleagues in the Department of Defense.”)

Eventually, Mulder and Scully work with Wilczek to fry the AI, much to the chagrin of a Defense Department mole who has been working at Eurisko the whole time. File closed!

Back in 1993, “Ghost in the Machine” fit snugly into the paranoid “truth is out there” ethos of a sci-fi show about alien conspiracies. Now, it’s closer to the realm of documentary.

Although the show would return to the subject of AI again 25 years later in one of the reboot episodes—2018’s “Rm9sbG93ZXJz,” a more Black Mirror-y spin on fearing one’s smartphone—it’s the older and admittedly cheesier outing that is far more relevant in 2026.

Its most glaring point of prescience, of course, is that it appears to have predicted with spooky accuracy the recent battle between the U.S. government and AI heavyweight Anthropic—not to mention the government’s use of AI in its current war with Iran.

Our more acquisitive colleagues in the Department of Defense

Unlike his fictional counterpart in The X-Files, Anthropic cofounder Dario Amodei was very much interested in lending his AI model to Uncle Sam. Last July, Anthropic signed a $200 million contract with the U.S. Department of Defense to provide its Claude model for use in classified and operational work.

It was only when negotiations began over what such work might actually entail that irreconcilable differences emerged.

As the back-and-forth dragged on through late 2025 and into this January, the major sticking points involved Anthropic’s demand for usage restrictions on Claude: mainly, that it shouldn’t be deployed for mass domestic surveillance or for building fully autonomous weapons without human oversight.

The Pentagon insisted otherwise.

Here’s where the similarities between Amodei and Eurisko’s Wilczek get really interesting. (The fact that Amodei bears something of a physical resemblance to Wilczek can’t be ignored either.)

Why did the fictional founder want to protect civilian populations from the U.S. Defense Department using his AI? He explains it himself in the following exchange with Mulder:

Wilczek: After the bomb was dropped on Hiroshima and Nagasaki, Robert Oppenheimer spent the rest of his life regretting he’d ever glimpsed an atom.

Mulder: Oppenheimer may have regretted his actions but he never denied responsibility for them.

Wilczek: He loved the work, Mr. Mulder. His mistake was in sharing it with an immoral government. I won’t make the same mistake.

Amodei publicly presents himself in a similar light, if with less on-the-record talk about government immorality.

He has frequently recommended Richard Rhodes’s book The Making of the Atomic Bomb in interviews, reportedly used to give copies of the book to new employees, and keeps one on prominent display in the Anthropic library.

Though Amodei’s peer, OpenAI cofounder Sam Altman, has also spoken often of Oppenheimer as a cautionary example, Amodei has now proven more willing to stick to his guns on the issue.

In recent weeks, Defense Secretary Pete Hegseth gave Anthropic an ultimatum to drop its demand for safety guardrails or face consequences. Anthropic refused. As a result, Hegseth made good on his threat, formally designating Anthropic a “supply chain risk”—the first time the Pentagon has applied that label to a U.S. AI firm.

Anthropic has since sued the Pentagon over this measure. As a bonus, the White House labeled Anthropic a “radical left,” “woke” company, and President Trump directed all federal agencies to stop using Claude.

Meanwhile, former Oppenheimer-recaller Altman has agreed to let OpenAI fill the military void, albeit with guardrails, according to the company.

AI at war

The X-Files episode “Ghost in the Machine” ends with the Department of Defense thwarted and its desired AI, which has ostensibly been destroyed, telegraphing to viewers that it is still alive, so to speak: the equivalent of a hand shooting out of a grave at the end of a horror movie.

In real life, though, the government got a hold of its AI without the need for any innuendo.

Despite the formal ban on federal use of Anthropic’s tools, parts of the U.S. military continue to rely on Claude in combat operations, since they were already deeply embedded. (Removing them completely could take months.) In the meantime, according to the Wall Street Journal, the current war with Iran is demonstrating Claude’s usefulness.

“AI tools are helping gather intelligence, pick targets, plan bombing missions and assess battle damage at speeds not previously possible,” the report reveals. “AI helps commanders manage supplies of everything from ammunition to spare parts and lets them choose the best weapon for each objective.”

On February 28, at the start of the U.S.-Israel war on Iran, a Tomahawk missile struck an Iranian elementary school, claiming the lives of at least 175 people—most of them children. Recent reporting strongly suggests that not only was the U.S. at fault for the missile strike, but that the school was on a U.S. target list and may have been mistaken for a military site.

As of this writing, nobody in the U.S. government has claimed responsibility for the mistake.

The X-Files episode and movies like Terminator 2 stoked the fear that a sentient AI might decide to wipe out all of humanity. They couldn’t foresee the more immediate threat in 2026: that an immoral government would decide to wipe out a portion of humanity and let AI take the blame.