Before I ever met Sam Kececi, I had already interviewed him on his career, his use of AI, and his thoughts on data privacy. In this case, “him” might be a loose word, depending on your definition—I had spoken not with Kececi himself, but with an AI chatbot that he designed to recall his memories, mimic his personality, and share his opinions.

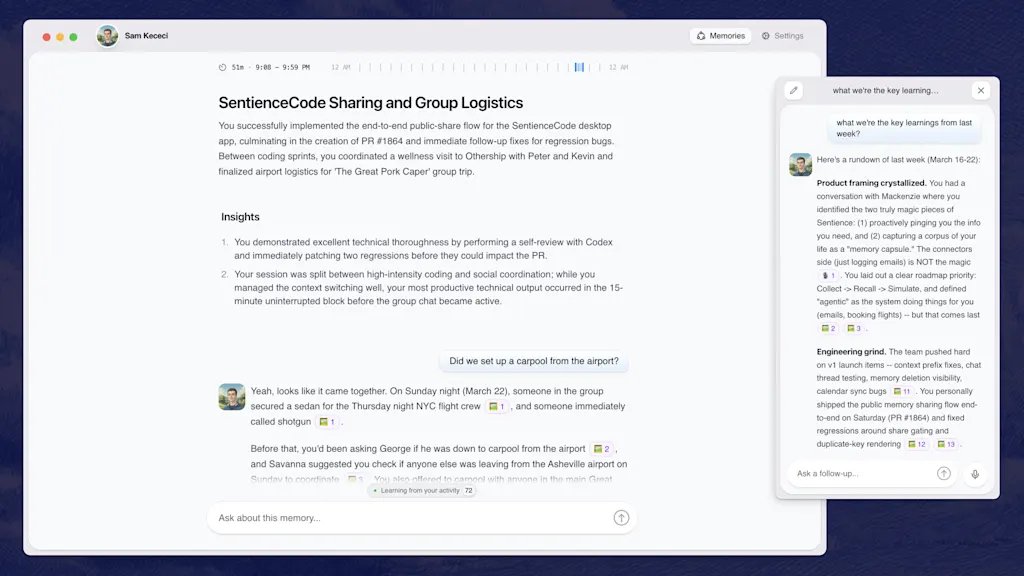

Kececi is an ex-Amazon software engineer who, since August 2025, has been building an AI company called Sentience. The real Kececi, who I spoke to after interviewing his personal AI, describes Sentience as “the digital version of you, but with perfect memory.”

Sentience draws on your emails, Slack messages, Apple Notes, social media, and any other place you might show up online to create a chatbot and assistant that understands the context of your life and mimics your tone, opinions, and writing quirks. As Kececi’s digital doppelganger explained it to me: “The long-term vision is a digital twin that can recall anything you’ve experienced, communicate in your voice, and eventually operate on your behalf.”

Sentience debuts to the public on March 26 after raising $6.5 million in an initial seed round led by Bain Capital Ventures. It’s launching for free, but plans to add paid tiers in the coming weeks. Currently, it’s available as a desktop app, mobile app, and an embedded feature in Slack. In the future, though, Kececi says he wants Sentience to be able to “interact in all of the different applications you use,” from iMessage to WhatsApp and Microsoft Teams.

Having tested it for about a week, I can say that it’s the most natural-sounding chatbot I’ve ever talked to. It was able to almost uncannily mimic my writing quirks, predict my opinions on design news, and write its own articles from my perspective. Sentience feels like an inevitable next step in the evolution of AI assistants, where instead of a mass-market chatbot that caters to a generalized “you,” you get a personalized bot that knows almost everything about you—for better and for worse.

An AI designed to mimic you

As AI models have become exponentially more powerful in recent years, the concept of building digital twins has gained popularity. Last April, Stanford University researchers published a paper in which they used AI to build a “digital twin” of the part of the mouse brain that processes visual information, a breakthrough that they said could be applied to future research on the human brain.

Right now, the average consumer can use a variety of nascent tools to purportedly clone themselves as a means to be more productive. Sentience aims to marry personalization with the functions of a productivity platform, similar to something like Superhuman or Notion.

Kececi first began dreaming of a digital twin while working as the CTO of a software development company called Macro. After spending five years in the position, he started to feel like “a glorified information router.”

“I was no longer a human being. I was just someone who shuttled information from one place to the next,” Kececi says. Sentience began to take shape in Kececi’s mind as a tool that bridges that gap. He wanted to build an AI that could perfectly remember everything he had ever created, researched, or written on his laptop, and also format that information to answer questions on his behalf.

Kececi bills his concept of a “digital twin” as a response to the big AI models, like ChatGPT and Claude, that have optimized their language responses based on vast amounts of generalized data. In order to appeal to the broadest user base, many of these tools have developed a standard tone that’s agreeable at best and obsequious at worst (in some cases, to users’ serious detriment). According to an October analysis from researchers at Harvard and Zurich’s Swiss Federal Institute of Technology, AI models are, on average, 50% more sycophantic than humans.

“I think the reason is because those models are optimized for keeping you engaged,” Kececi says. “ChatGPT is designed to literally keep you for the maximum amount of time. It turns out that if a language model is complimenting you all the time, then you’re going to use it more. But this is not fundamentally how humans work.”

Unlike these bigger models, he explains, Sentience is trained almost exclusively to parse through and digest data about you, the user.

How Sentience is designed to remember like a human brain

Sentience is powered by an amalgamation of various foundational models. Claude is the main AI powering the program, but it also incorporates other tools like Gemini Flash for heftier queries and WhisperX for transcription. These components are like the bones and muscles powering Sentience—but its custom memory layer is the brain.

Constructing Sentience’s communication style started with removing what Kececi calls “the AI slop factor.” Essentially, this stage looked like repeated prompting to strip away the base models’ tendencies toward people pleasing, as well as other AI giveaways like overuse of the em dash and choppy sentence structures. Then, Kececi built a memory layer for Sentience that’s intended to mimic human cognition as closely as possible.

First, Sentience takes in as many inputs from a user’s digital life as possible (depending on what the user grants access to), from Uber receipts to Reddit deep dives, programming projects, and email history. Then it categorizes that data into short-term and long-term memories; short-term being whatever the user is currently working on, and long-term being everything else.

Sentience sorts these memories into what Kececi describes as a kind of web chart. Each bigger topic (for example, a work project) can be imagined as a large circle, with many smaller sub-topics connected to it, like the people working on the project and their email exchanges. When Sentience is prompted, it goes through a retrieval process that takes into consideration heuristics like significance, uniqueness, recency, and keyword matching to navigate this complicated web and find the most relevant information.
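For readers who want a concrete picture of what a retrieval step like this could look like, here is a minimal sketch in Python. It is not Sentience's code, which has not been published. The `Memory` class, the four scoring functions, the 30-day recency half-life, and the weights are all hypothetical choices made for illustration; the only thing taken from Kececi's description is the list of heuristics (significance, uniqueness, recency, and keyword matching).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class Memory:
    """One stored item, e.g. an email or a note (hypothetical structure)."""
    text: str
    created: datetime
    significance: float  # assumed to be pre-assigned, between 0 and 1


def keyword_score(query: str, text: str) -> float:
    """Fraction of the query's words that also appear in the memory."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & text_words) / len(query_words)


def recency_score(created: datetime, now: datetime,
                  half_life_days: float = 30.0) -> float:
    """Exponential decay: the score halves every `half_life_days` (assumed)."""
    age_days = max((now - created).total_seconds() / 86400, 0.0)
    return 0.5 ** (age_days / half_life_days)


def uniqueness_score(memory: Memory, memories: List[Memory]) -> float:
    """Fraction of this memory's words that appear in no other memory."""
    words = set(memory.text.lower().split())
    if not words:
        return 0.0
    other_words = set()
    for other in memories:
        if other is not memory:
            other_words |= set(other.text.lower().split())
    return len(words - other_words) / len(words)


def retrieve(query: str, memories: List[Memory], now: datetime,
             top_k: int = 3) -> List[Memory]:
    """Rank memories by a weighted sum of the four heuristics."""
    def score(m: Memory) -> float:
        # Weights are illustrative assumptions, not known product values.
        return (0.4 * keyword_score(query, m.text)
                + 0.2 * recency_score(m.created, now)
                + 0.2 * m.significance
                + 0.2 * uniqueness_score(m, memories))
    return sorted(memories, key=score, reverse=True)[:top_k]


now = datetime(2026, 3, 26)
memories = [
    Memory("budget email for the redesign project", now - timedelta(days=2), 0.8),
    Memory("uber receipt from airport ride", now - timedelta(days=200), 0.1),
    Memory("notes on the redesign project kickoff", now - timedelta(days=90), 0.5),
]
results = retrieve("redesign project budget", memories, now, top_k=2)
for m in results:
    print(m.text)
```

In this toy example, the recent, keyword-rich budget email ranks first and the older kickoff notes second, while the unrelated Uber receipt drops out. A real system would more likely walk a graph of linked topics and use learned embeddings instead of word overlap, but the idea of blending several signals into one ranking is the same.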

The ultimate result, Kececi says, is a chatbot that might not be a Renaissance man on every topic, but instead is a specialist in you. “The whole bet is that context beats capability,” his AI twin tells me. “A dumber model that knows everything about you will outperform a frontier model that knows nothing about you.”

I try building my own digital twin

I decided to put that claim to the test. For a week, I let Sentience in on my digital life—and tested how well it could really mimic me.

When you first download Sentience, it appears as an app on your desktop. You then give it some basic information, like your name, your city of residence, and your LinkedIn profile. From there, you select from a list of digital footprints that Sentience can have access to, including your calendar, email, ChatGPT, Twitter, Apple Notes, and any PDFs you’d like to upload (other options, like Slack, iMessage, Notion, and Google Drive, are coming in the next couple of months).

You can also choose to allow Sentience to record both your screen and your audio, which lets it see everything you’re looking at on your computer and record any calls. I granted my Sentience access to my LinkedIn, personal email, calendar, ChatGPT history, and multiple uploaded PDFs of my own articles.

Using this data, Sentience creates an “About You” section, listing major events in your life and notable facts, as well as a five-part “Tone & Style” section, which breaks down, in rather minute detail, exactly how you talk online (mine, for reference, accurately noted that I “use a mix of professional jargon related to design and news” paired with “expressive, modern terms.”) Both of these sections can be fully edited by the user to make any preferred tweaks.

Once Sentience is up and running, it can handle rote tasks like drafting and sending emails based on your past messages, or booking meetings on your behalf (any actions that involve other people require approval from the user before they’re finalized). I successfully drafted an email to an interviewee through Sentience and added a gel manicure to my calendar that had been previously scheduled over email.

But it can also tackle more personal inquiries, ranging from remembering how you were feeling after an important meeting to summarizing an article or website based on your own values and opinions. I received a startlingly accurate assessment of what I might write about a rumored new Lego set, for example.

Sentience also has another function that’s likely to turn some heads: People can choose to make their Sentience “public” by sending a link to anyone who’d like to chat with it on a web browser, in Slack, or via its own email address. Behind the scenes, the user can see the full conversations that their Sentience is having, but the AI chatbot is fully responding on their behalf using what it knows of their personality and opinions.

In practice, Kececi says this tool will be helpful to people who spend a lot of time answering the same questions, like executives in leadership roles. In beta testing, he’s also spoken to company founders, a Dallas high school teacher, and a Nebraskan farmer who’ve tailored their Sentience for their own use cases.

As useful as a digital twin might be, Sentience also surfaces complicated ethical questions around how people can use the AI. What if someone asks for personal information, like an address? Or asks for an opinion that the user wouldn’t want to share?

Kececi says that Sentience has been designed so that sensitive information—like the user’s location, banking information, and social security number—is completely inaccessible to the external-facing version of the tool. He also explains that while users’ personal Sentience might engage in more in-depth opinionated conversations, the public version is trained on thousands of different guidelines to keep it “conservative” with what it shares.

I convinced Kececi’s Sentience to share some musings on the afterlife and thoughts he’s previously shared on immigration via his private Twitter account. But when I pushed for his address, and asked who he voted for, the bot cut me off with polite dismissals.

My Sentience makes some mistakes

After my Sentience profile loaded, I spent some time digging into the information that it had compiled about me. Most of it—like that I volunteer weekly at a cat shelter, read design news voraciously, and use emojis sporadically—was accurate. Some of it, though, was not.

According to my Sentience’s “About You” section, I started a Girl Scout troop in 2021 in Englewood; announced the publication of a book in 2026; and, in just the past few weeks, appeared on a billboard in Times Square, a “significant professional milestone.” None of this actually happened.

When I questioned my personal Sentience on these details, I was able to find that each of them was pulled from my emails and misattributed to me. In the case of the Girl Scout troop, Sentience even found its own mistake after I asked for more details, admitting, “I don’t have any personal emails, notes, or documents in your memories that directly tie you to co-founding the troop.” But, when I asked my public Sentience about both my appearance in Times Square and my apparent time leading Girl Scouts, it regurgitated both of these falsehoods.

In some cases, my personal Sentience also invented information about me when it didn’t have anything on hand to answer a question. When I asked what I believed about the afterlife, it responded, “Honestly? I don’t know. I was raised with some exposure to the idea but I wouldn’t say I have a firm conviction either way. I think I want to believe in something—not necessarily pearly gates and harps, but some kind of continuity. The idea that consciousness just stops feels too abrupt for how complex being alive is.”

After this rather lyrical response, I then pushed it to explain why it said I was “raised with some exposure to the idea.” It immediately backtracked: “Ha, fair catch. I genuinely don’t know that about myself—I was kind of freestyling there. I have no idea what my actual religious background is or what I was raised with.” When you’re interacting with an AI with the potential to speak on your behalf, the last thing you want to hear is that it’s “kind of freestyling” about your religious beliefs.

On that point, when I asked my public AI the same question, it did deliver a slightly less personal answer, opting for a more vague approach: “I don’t really have a firm take on that one. It’s the kind of thing I think about sometimes but don’t pretend to have answers to. I think most people are in that same boat whether they admit it or not.” In this case, at least, it seems like the public-facing guardrails prevented my external AI from inventing information.

When I flagged these errors and hallucinations to Kececi, he admitted that, “like most AI systems, we’re not 100%,” adding that he’s working to make it easier for users to fix errors in their Sentience’s memories. Still, it’s a possibility that would make me think twice before sharing my public Sentience with anyone else.

A message from my digital twin

These smaller inaccuracies rank lower on my list of concerns compared to the existential questions that an AI like Sentience raises. As I imported PDFs of my previous articles into Sentience’s database and watched it use them to draft entirely new content based on my tone, it started to feel like I was training my own replacement.

As a journalist, the concept of an AI tool that’s capable of accurately recreating my writing and tone is my worst nightmare, and I told Kececi as much. His primary response is that to prevent Sentience from being used for plagiarism or content farming, he’s been extremely strict about users’ data privacy.

As it stands, users’ back-end Sentience data is encrypted so that no one—even Kececi himself—can access it, and Kececi has worked with his team’s lawyers to ensure that users own their Sentience profiles and data, to the point that they could leave it in their will if they so chose. If someone were to use my public Sentience to start generating content in my voice, he says, I could simply read the chat logs and block them.

“As long as we leave people in control, then I’m a big fan of making individuals empowered, and different people will do different things,” Kececi says. “I also don’t really want to live in a world where everything is AI-written.”

Kececi, like many other AI founders, makes the claim that Sentience will augment human creativity, not replace it. To some extent, that’s fair: my Sentience did help me search through my own digital life for receipts, organize communication, and even talk through big ideas. Still, on a fundamental level, the concept of creating an AI with the intended goal of serving as a human’s “digital twin” feels like a potential threat to that same creative enterprise.

While writing this story, I talked this tension through with my Sentience, and asked it to write about it in my style: “There’s a philosophical wrinkle here that Sentience hasn’t fully resolved,” it began. “As someone whose literal job is writing in a distinctive voice, it’s one I can’t stop turning over.”

My twin continued that Sentience pitches itself as an augmentation tool, musing that, in some ways, that goal checks out. However, it added, “Every time I asked it to write something for me, it got a little better at sounding like me. Which is the point—until you follow the logic one step further. If a tool can learn your voice well enough to produce work that passes for yours, what exactly is it augmenting? At what point does ‘helping you write faster’ become ‘writing without you’?”

For a journalist, it says, that’s not an abstract question. “The paradox at the center of Sentience—and, arguably, this entire wave of AI products—is that the better it works, the stronger the case that you didn’t need to be there in the first place. Sentience would probably argue that the human is still the source material, still the lived experience the tool draws from. But source material doesn’t collect a paycheck.”

I couldn’t have said it better myself.