On February 27, an AI-generated image appeared on Instagram purporting to show heavy military equipment stationed inside Karimian Elementary School in Isfahan, Iran. The post, shared by accounts including the Free Union of Iranian Workers, an independent labor union operating inside Iran whose leaders have been jailed by the regime, read: “This is not a military zone! It’s Karimian Elementary.” The image carried a visible Google Gemini watermark, indicating that it had been created by the software. The school posted a rebuttal, noting that the equipment could not physically fit on the premises. Iranian-diaspora fact-checkers confirmed that the image was fabricated.

The next day, Shajareh Tayyebeh, a girls’ elementary school in the southern city of Minab, was hit in the first wave of strikes on Iran. Iranian authorities reported at least 175 people dead, many of them children. The exact death toll has not been independently confirmed, but a New York Times investigation verified that the school had been hit by a precision strike at the same time as attacks on an adjacent naval base, and a preliminary investigation by the American military concluded that U.S. forces were most likely responsible. The school sat on the grounds of the Iranian navy’s Asef Brigade barracks, an active military base. The building had been converted from military use, and served children from military and civilian families.

In short: The day before the strikes began, an AI image on social media planted the notion that the regime hides military equipment in schools. The next day, a real school—once part of a military compound but walled off from it since 2016, according to Human Rights Watch—was destroyed. The fake was wrong about Karimian, but by the time the Minab strike happened, audiences were primed to believe that a school was a legitimate military target, not the site of a civilian catastrophe. Layer by layer, an accumulation of AI imagery circulated on social media that made it difficult to establish what happened to these children.

This is the fog that AI has introduced to the war in Iran. This isn’t a war in which AI fakes fool everyone, nor one in which detection tools catch everything. We live in a world where real photographs of real civilian deaths are called fake, and where fake images are used to illustrate real deaths. It is a world where the correct identification of one fake image is used to cast doubt on real images, where incorrect detection is treated as authoritative, and where all of it happens faster than any institution, newsroom, fact-checker, photo wire service, or platform can process. The fog of AI does not need every piece of content to be fabricated. It needs only the question “Is this real?” to become close to unanswerable.

When video of the Minab devastation circulated, claims spread on X, Telegram, and Instagram that the footage was actually from Peshawar, Pakistan. Fact-checkers intervened, this time to defend the authenticity of real footage, having debunked the fake imagery about a different school the day before. Users on X, many of them diaspora accounts opposed to the regime, claimed that the footage depicted the May 2021 bombing of the Sayed ul-Shuhada school in Kabul. Another user asked Grok to verify the post, and Grok agreed with the false claim, citing The New York Times, the Guardian, Al Jazeera, and Wikipedia as sources even though they contained images directly contradicting it. Then open-source intelligence analysts geolocated the footage to coordinates matching the school. Grok was not simply wrong; it was confidently wrong. Asked to verify a real video, the AI confirmed a false claim and backed it with citations to sources that did not support it, giving denialism machine authority.

Meanwhile, Iran undermined the documentation of the tragedy. The Iranian embassy in Austria denounced the Minab strike and accused Europe of complicity in the “death of our collective soul.” The post included a photograph of a child’s pink backpack covered in blood and dust. SynthID, Google’s watermarking tool, confirmed that the image had been generated by Google’s AI. The regime illustrated the deaths of real children with a fabricated image. The identification of that fake photo now furnishes an alibi for those who want to deny the real bombing.

The Iranian regime has long dismissed evidence of its violence and crimes by calling the documentation fabricated, staged, and foreign produced. Now a similar accusatory reflex has migrated to opposition media and diaspora accounts. Yet children were killed, even if there is false propaganda about their deaths. That the regime has an interest in publicizing these deaths does not mean that the deaths did not happen.

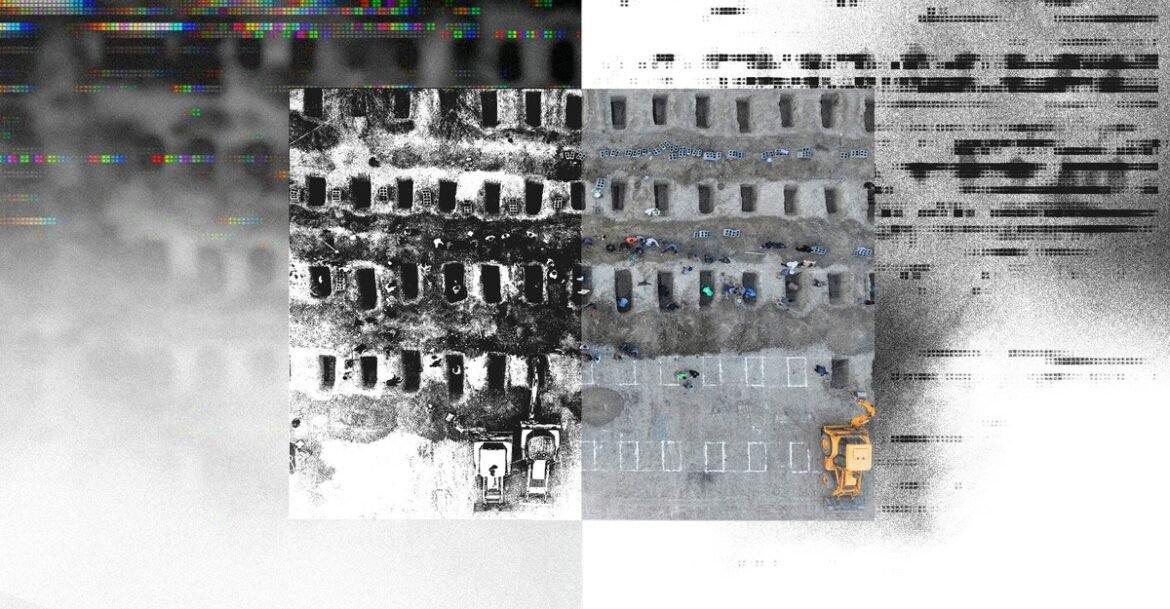

Mourners in Minab buried the schoolgirls and staff on March 3. Iran’s foreign minister, Abbas Araghchi, posted a photograph on X of the burial site that was viewed 3 million times. Within hours, a diaspora account claimed that the image had been recycled from a Jakarta cemetery where COVID victims were buried in July 2021. The claim named the cemetery, the date, and the photographer, but none of that information was supported by reverse image search, metadata analysis, or other fact-checking. A verified account posted a “claim versus fact” graphic that said: “Iran releases AI altered photo of graves being dug for 160 girls.” At the same time, an account calling for “transparent investigation to ensure accountability” illustrated the real tragedy with an AI-generated image of parents mourning over shrouded bodies, further contaminating the evidentiary record that the post was trying to defend. The New York Times visual-investigations team geolocated the burial site to Minab’s Hermud Cemetery. Satellite imagery showed that the graves were dug on Monday in a previously untouched section of ground, consistent with a Saturday bombing and a Tuesday funeral. A New York Times journalist noted on X that the image was not AI generated.

To learn that the regime staged an elaborate, televised funeral for children killed by foreign strikes produces in many Iranians, inside and outside the country, a rage that I understand. The protests that started on December 28, 2025, and reached their peak intensity on January 8 and 9, were answered with what are thought to have been massacres of thousands of protesters, including children. Parents went to great pains to retrieve their children’s bodies. When bodies were returned, families were sometimes asked to pay exorbitant fees, made to agree to conditions denying burials or dignified funerals, or forced to concede that the dead were members of the security forces and had been killed by “terrorists.”

But the resentment at the regime’s selective grief does not make the graves empty. It does not make the children un-real. And it does not justify dismissing evidence of their deaths with a two-letter accusation: “AI.”

Both formulations, the denials of the bombing and the uses of the bombing for propaganda, begin and end in the same place: Evidence has ceased to function as it should.

One hundred seventy-five people were reportedly buried in Minab, most of them children. Nearly every actor in this conflict, from every direction, has made it difficult to establish that these children lived, that they were killed, and that someone is responsible. In Minab, the fact of these children’s deaths has been documented, verified, and geolocated. None of it has been enough to prevent the doubt from spreading faster than the evidence.